New: Reporting Bugs in Status Desktop 2.0

Make the jump to web3

Use the open source, decentralised crypto communication super app.

Betas for Mac, Windows, Linux

Alphas for iOS & Android

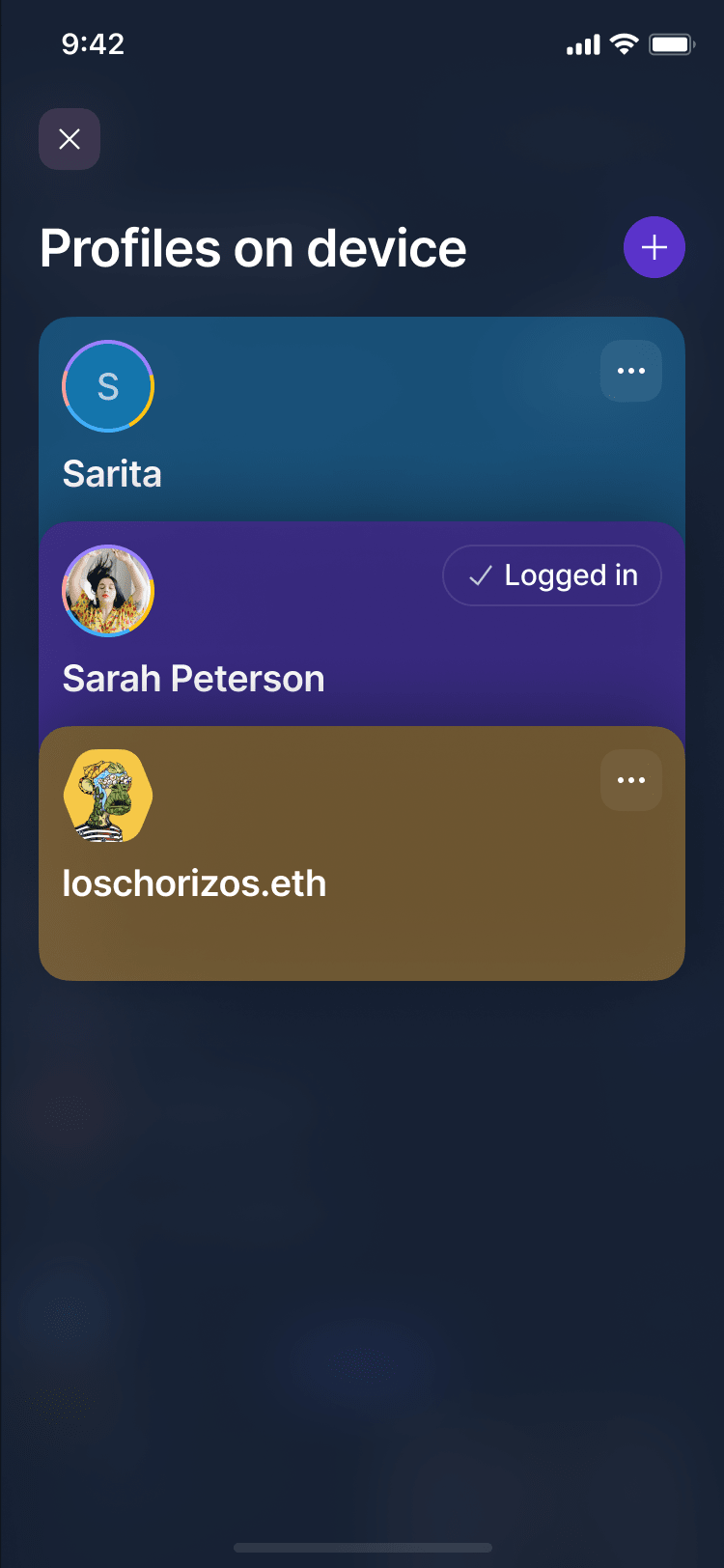

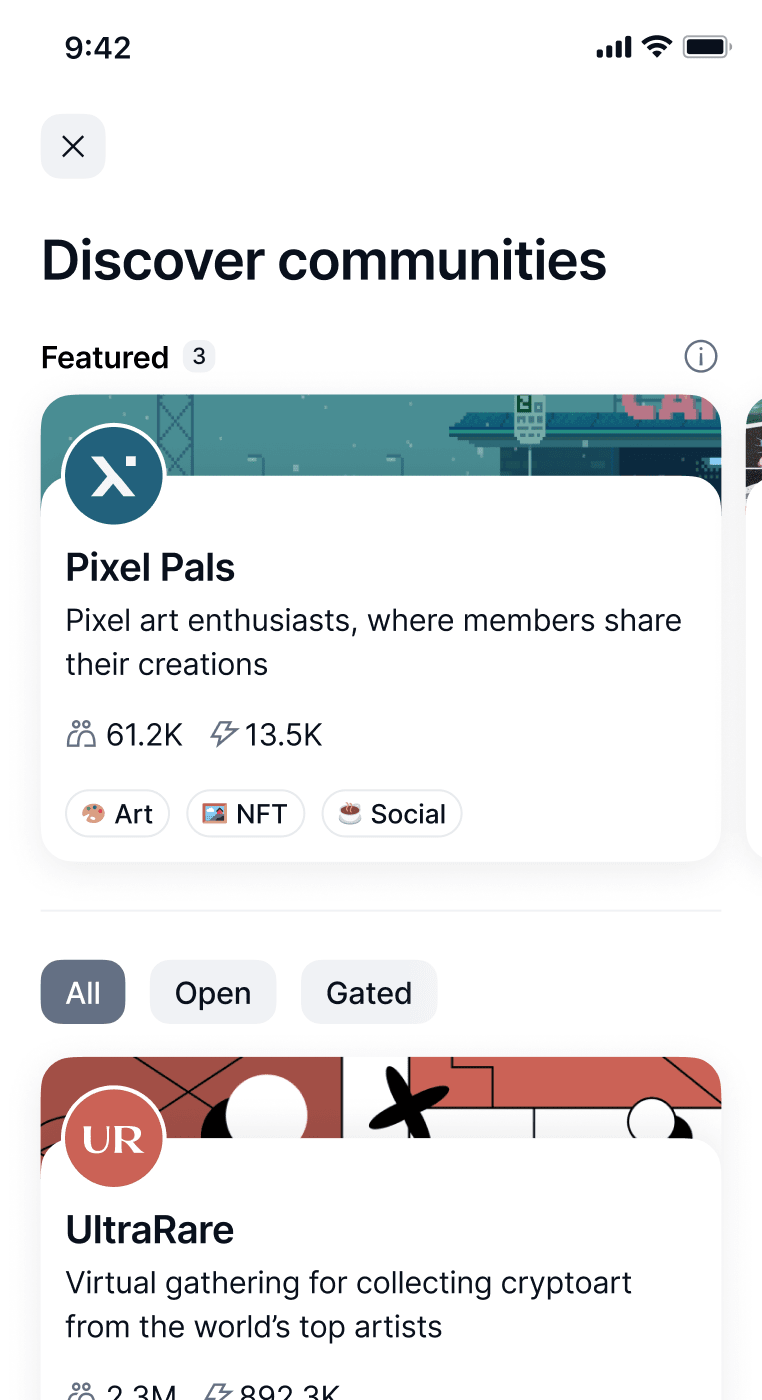

Communities

Discover your community

Find your tribe in the metaverse of truly free Status Communities.

Explore the universe of self-sovereign communities.

Decentralised and permissionless.

Access token-gated channels. Become eligible for airdrops.

Create community

Take back control

Don’t give Discord or Telegram power over your community.

Messenger

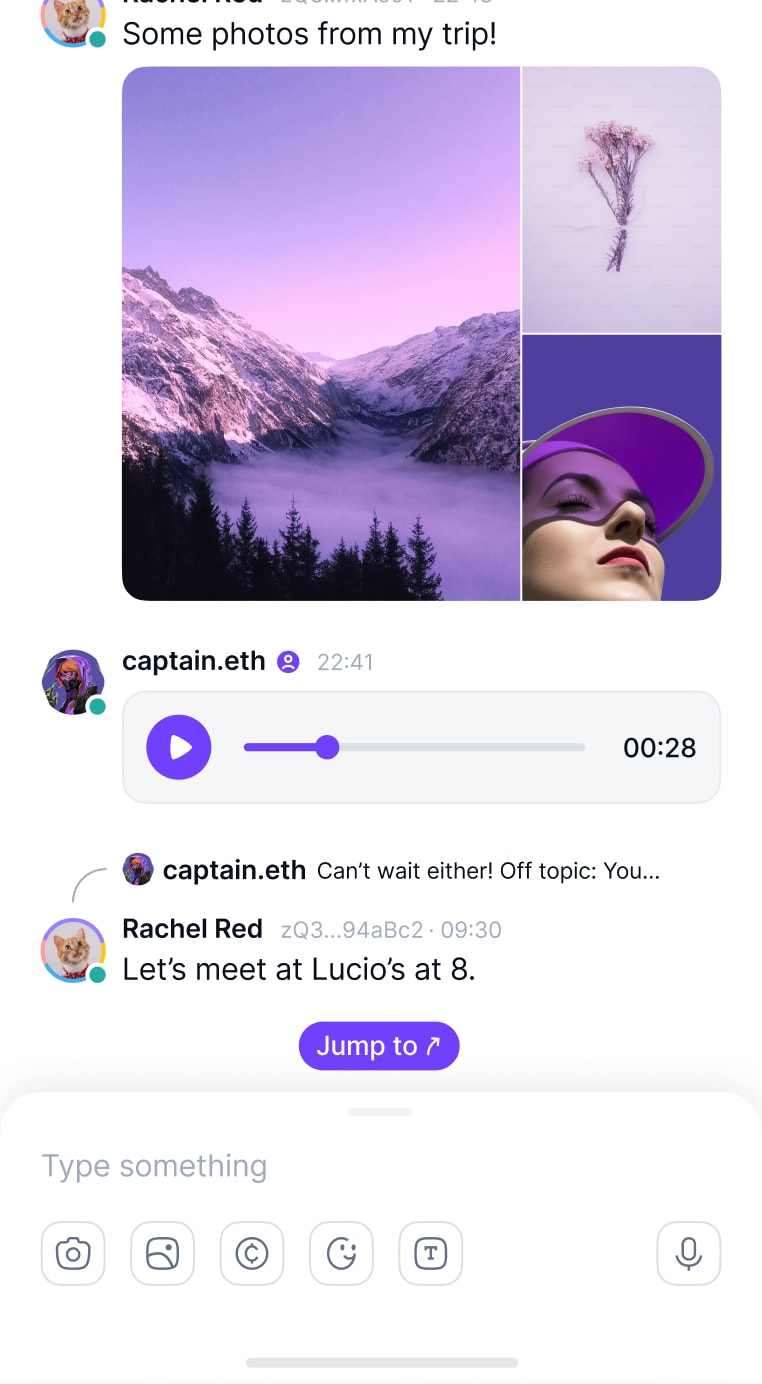

Chat privately with friends

Protect your right to free speech with decentralised messaging, metadata privacy and e2e encryption.

Create and join unstoppable group chats.

With perfect forward secrecy

It’s the internet.

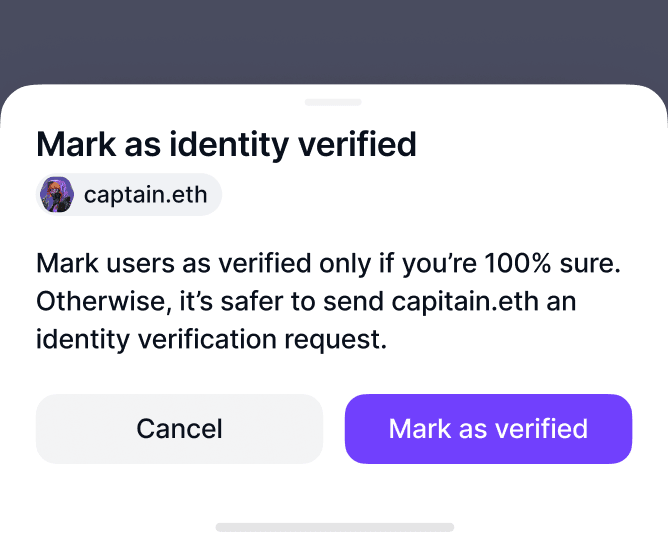

Verify your contacts.

Send crypto to your friends directly from chat.

Wallet

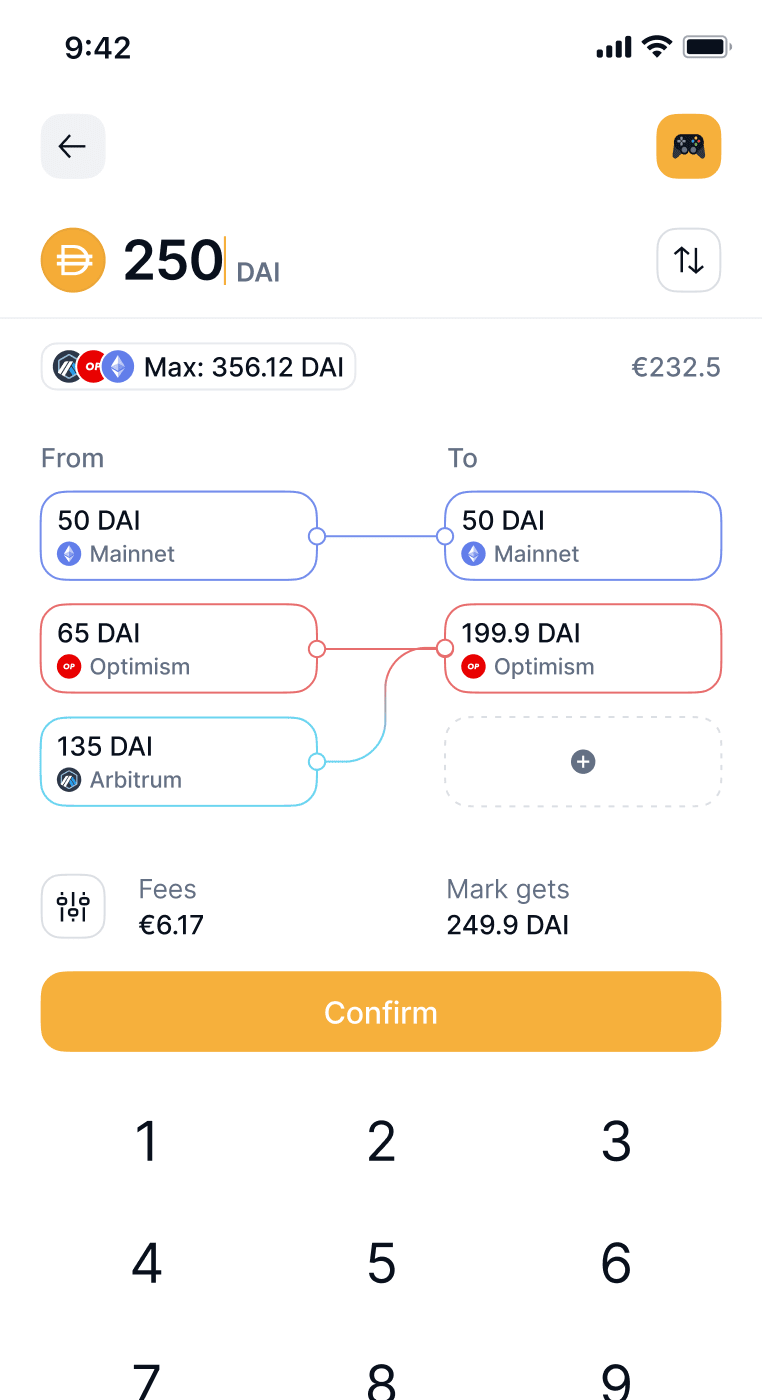

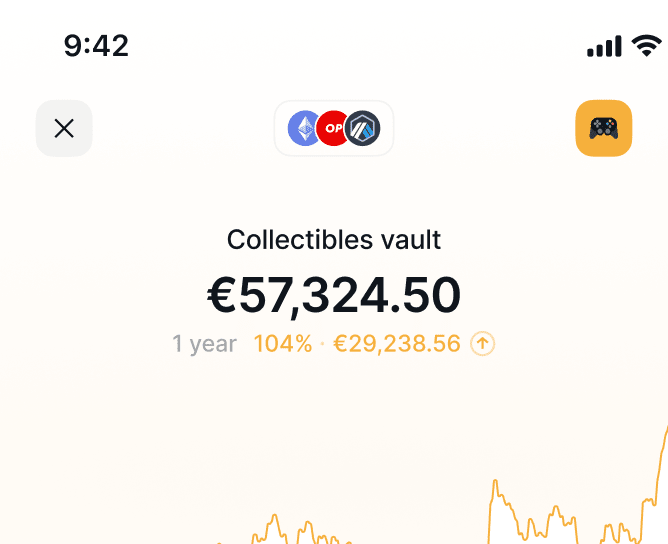

The future is multi-chain

L2s made simple - send and manage your crypto easily and safely across multiple networks.

Send with automatic bridging. No more multi-chain hassle.

Fully self-custodial. Nobody can stop you from using your tokens.

See how your total balances change over time, in fiat.

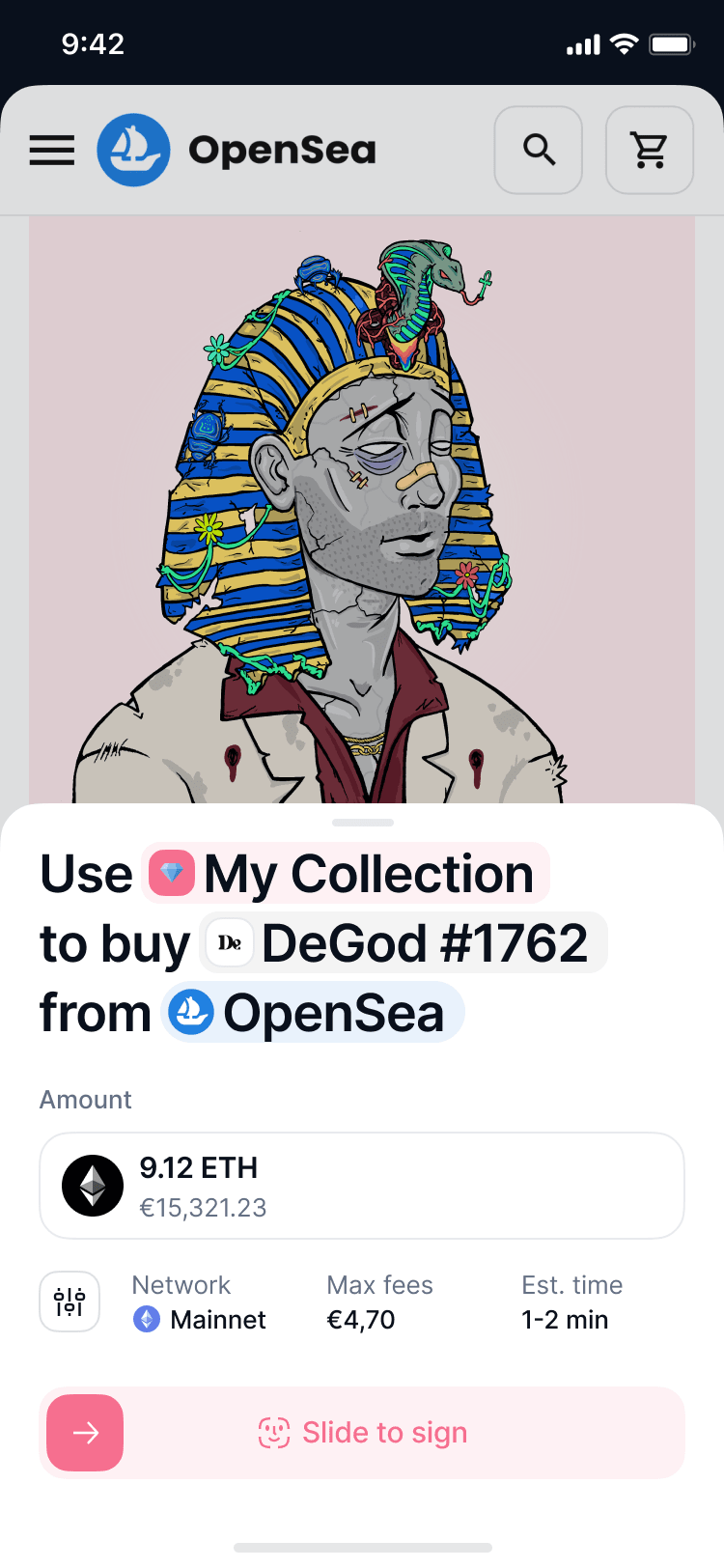

dApp Browser

Explore dApps

Interact trustlessly with web3 dApps, DAOs, NFTs, DeFi and much more.

Be free from tracking and data collection.

Omnichain dApp connections. So you don’t have to pick chains.

open source, decentralised crypto communication super app

Like an operating system, whatever you’ve recently been doing is just a few taps away. Go from chatting with a friend to an account without having to navigate your way back.

Status is better with Keycard

Decentralising the future

Building apps to uphold human rights, protect free speech & defend privacy.

A token by and for Status

Participate in Status’ governance and help guide development with SNT.

Stay up to date

Follow development progress as we build a truly decentralised super app.

Be unstoppable

Use the open source, decentralised crypto communication super app.

Betas for Mac, Windows, Linux

Alphas for iOS & Android
